This FAQ answer is an excerpt from SNIPPETS by Bob Stout.

Don't hold your breath. Think about it… For a decompiler to work properly, either 1) every compiler would have to generate substantially identical code, even with full optimization turned on, or 2) it would have to recognize the individual output of every compiler's code generator.

If the first case were true, there would be no more need for compiler benchmarks, since every compiler would work the same. The second case would require an immensely complex program that had to change with every new compiler release.

So what about specific decompilers for specific compilers, say a decompiler designed to work only on code generated by BC++ 4.5? This gets us right back to the optimization issue. Code written for clarity and understandability is often inefficient. Code written for maximum performance (speed or size) is often cryptic (at best!). Add to this the fact that all modern compilers have a multitude of optimization switches to control which optimization techniques to enable and which to avoid. The bottom line is that, for a reasonably large, complex source module, you can get the compiler to produce a number of different object modules simply by changing your optimization switches, so your decompiler will also have to be a deoptimizer which can automagically recognize which optimization strategies were enabled at compile time.
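To make the optimization point concrete, here is a minimal sketch in C. The function names are made up for illustration, and the second function shows only one transformation (strength reduction) that an optimizer might plausibly apply; what a particular compiler actually emits depends on the target and the switches used.

```c
#include <stdint.h>

/* Source as a programmer would write it for clarity. */
uint32_t times_ten_clear(uint32_t x)
{
    return x * 10u;
}

/* One form an optimizer might emit instead (strength reduction):
   x*8 + x*2, built from two shifts and an add. Whether a given
   compiler does this depends on the target CPU and on the
   optimization switches in effect. */
uint32_t times_ten_shifted(uint32_t x)
{
    return (x << 3) + (x << 1);
}
```

Both functions return the same value for every input, so a decompiler looking at the shifted form has no way to know that the programmer originally wrote a plain multiplication.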

Let's simplify further and specify that you only want to support one specific compiler and you want to decompile to the most logical source code without trying to interpret the optimization. What then? A good optimizer can and will substantially rewrite the internals of your code, so what you get out of your decompiler will be not only cryptic but, in many cases, riddled with goto statements and other no-no's of good coding practice. At this point, you have decompiled source, but what good is it?
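As a rough illustration of what "riddled with goto statements" can look like, the sketch below pairs a small readable function with a hand-written imitation of decompiler output. The second function is not the output of any real tool; the names `sub_0001`, `arg0`, `v0` and the labels are assumptions chosen to mimic the style such output tends to have.

```c
/* Original source: count the positive entries in an array. */
int count_positive(const int *a, int n)
{
    int count = 0;
    for (int i = 0; i < n; i++)
        if (a[i] > 0)
            count++;
    return count;
}

/* Hypothetical decompiled equivalent: the loop has been turned into
   compare-and-branch form and every meaningful name is gone. */
int sub_0001(const int *arg0, int arg1)
{
    int v0 = 0;                      /* was "count" */
    int v1 = 0;                      /* was "i" */
    if (arg1 <= 0) goto L3;          /* skip the loop when n <= 0 */
L1:
    if (arg0[v1] <= 0) goto L2;      /* inverted test from the "if" */
    v0++;
L2:
    v1++;
    if (v1 < arg1) goto L1;          /* loop back-edge */
L3:
    return v0;
}
```

The two functions compute the same result, but only the first one tells a maintainer what the code is for.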

Also note carefully my reference to source modules. One characteristic of C is that it becomes largely unreadable unless broken into easily maintainable source modules (.C files). How will the decompiler deal with that? It could either try to decompile the whole program into some mammoth main() function, losing all modularity, or it could try to place each called function into its own file. The first way would generate unusable chaos, and the second would run into problems where the original source had files with multiple functions using static data and/or one or more functions calling one or more static functions. A decompiler could make static data and/or functions global, but only at the expense of readability (which would already be unacceptable).
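The static data problem is easier to see with a small example. The module below is hypothetical (the file name `counter.c` and all identifiers are invented for illustration): two public functions share one file-private variable and one file-private helper.

```c
/* counter.c: a hypothetical source module. */

static int total = 0;            /* static data: visible only in this file */

static int clamp_to_zero(int v)  /* static function: private helper */
{
    return v < 0 ? 0 : v;
}

/* Public function 1: uses both the static data and the static helper. */
int counter_add(int delta)
{
    total = clamp_to_zero(total + delta);
    return total;
}

/* Public function 2: reads the same static data. */
int counter_get(void)
{
    return total;
}
```

If a decompiler placed `counter_add()` and `counter_get()` in separate files, `total` and `clamp_to_zero()` could no longer be static; they would have to become global names, which is exactly the loss of structure described above.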

Finally, remember that commercial applications often code the most difficult or time-critical functions in assembler, which could prove almost impossible to decompile into a C equivalent.

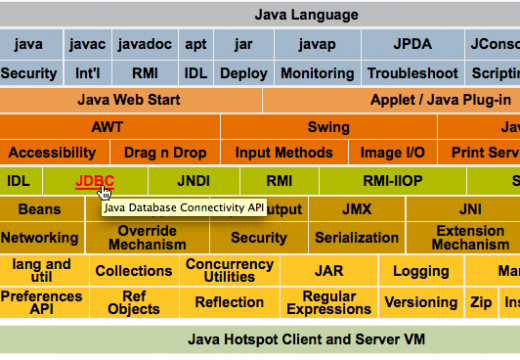

Like I said, don't hold your breath. As technology improves to where decompilers may become more feasible, optimizers and languages (C++, for example, would be a significantly tougher language to decompile than C) also conspire to make them less likely.

For years Unix applications have been distributed in shrouded source form (machine readable but not human readable: all comments and whitespace removed, variable names all in the form OOIIOIOI, etc.), which has been a quite adequate means of protecting the author's rights. It's very unlikely that decompiler output would even be as readable as shrouded source.
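For readers who have never seen shrouded source, here is a small sketch of the idea. The readable function and its shrouded twin are both invented for illustration and are not the output of any particular shrouding tool; the only property carried over from the description above is that comments and whitespace are stripped and every name is replaced with a string of O's and I's.

```c
/* Readable original: integer average of an array (0 if empty). */
int average(const int *values, int count)
{
    int sum = 0;
    for (int i = 0; i < count; i++)
        sum += values[i];
    return count > 0 ? sum / count : 0;
}

/* The same function after a hypothetical shrouding pass. */
int OIOOIIOI(const int*OOIIOIOI,int OIIOOIIO){int OOOIIOIO=0;for(int IOIOOIIO=0;IOIOOIIO<OIIOOIIO;IOIOOIIO++)OOOIIOIO+=OOIIOIOI[IOIOOIIO];return OIIOOIIO>0?OOOIIOIO/OIIOOIIO:0;}
```

The compiler treats both versions identically. Even so, the shrouded version still keeps the original loop and expression structure, which decompiler output of optimized code generally would not.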

Update: Decompiler technology is still very difficult, but significant advances have been made since this was written.

Read "What is a decompiler?" for updated information on decompiler projects.
